Hi. Can here. Today, we discuss privacy.

Privacy is Hip Now

Facebook wants in on the privacy action. Apple, though, is betting the farm on it. At least Apple says it is. You can have a cynical read on this: maybe it is less about privacy and more about kneecapping competitors. I generally lean towards the “privacy is good” camp, and think Apple wants to do the right thing. However, it’d be naive to argue that Apple does not want to make things harder for its rivals. You can be both good and ruthless.

Google wants to index the world’s information, but there are huge costs to this. You need to store the internet several times over, buy lots of servers, and hire people. Facebook… Look, I have followed the tech industry quite religiously for the last 10 years or so, but to this day, I don’t really know what drives Zuckerberg. He changes his tune often, and he’s really, and I mean really, performative about it, which irks me personally. But he too wants to run a tech company on the internet. Fine.

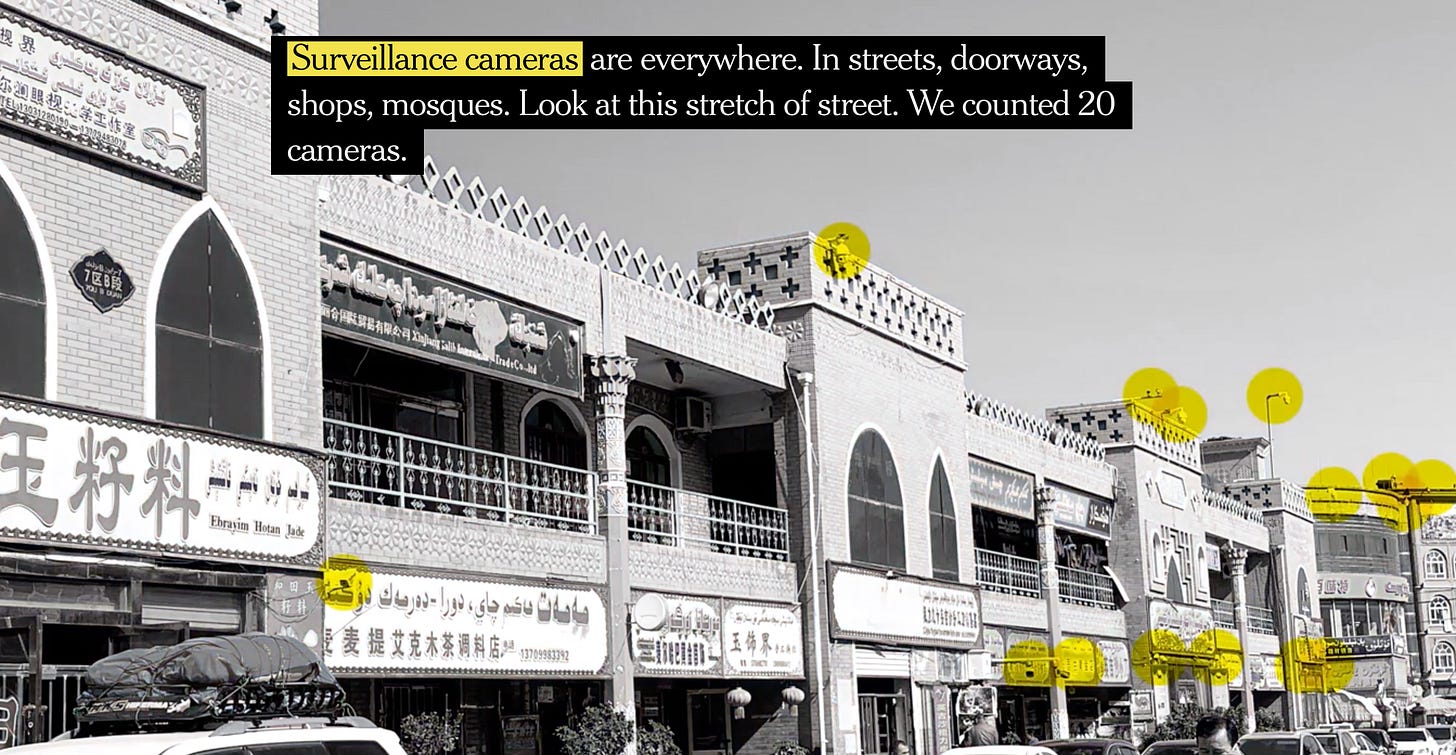

Either way, these companies need to make money. They do this largely by building dossiers of your online (and increasingly offline) activity and then selling advertisers access to your attention. There are some differences: Google observes you in your natural habitat as you go about your business. Facebook puts you in its walled garden with a bunch of spinning wheels to keep you occupied, while it surveils you. But the end result is similar. Track, sift, present.

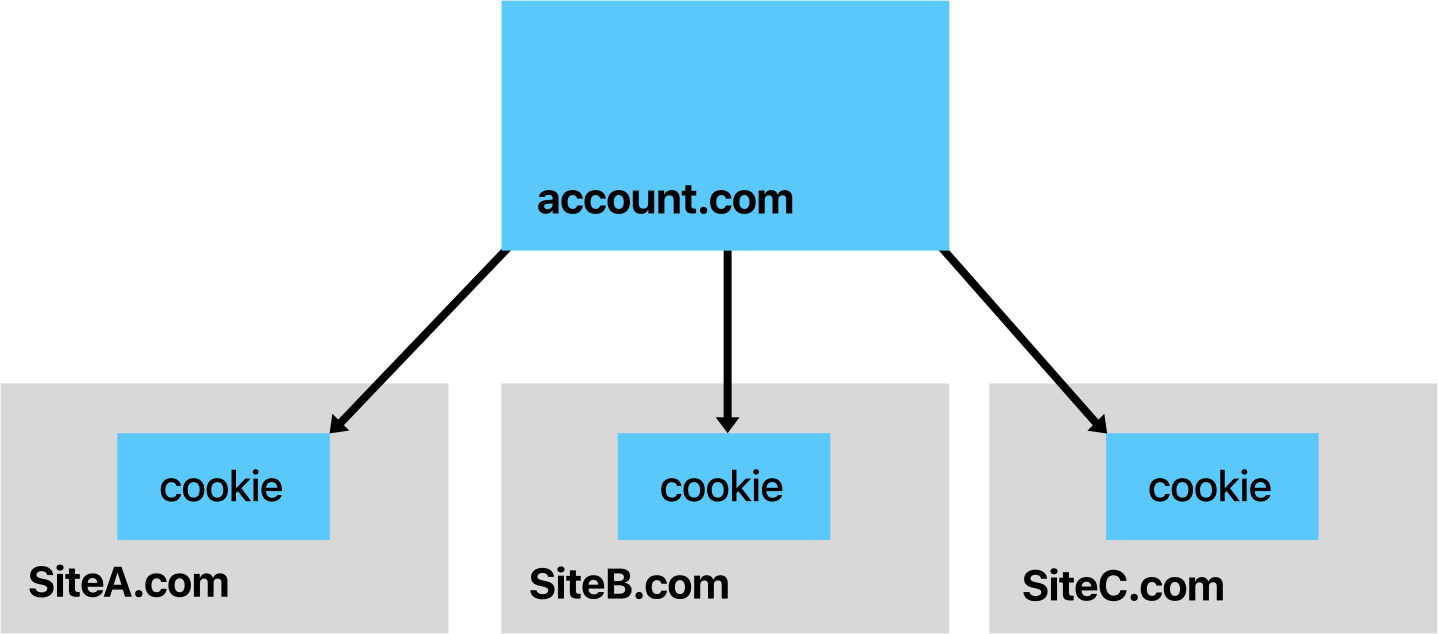

Apple especially doesn’t like this tracking part, and it recently dialed up the pressure. On August 14, WebKit, the engine behind Safari, announced a new policy of aggressively blocking pervasive cross-site tracking online. The main principle is that the only people who should know anything about the user are the owners of the website the user is visiting. If you are on the New York Times’ website, for example, the New York Times should be able to observe you, but not necessarily other people.
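To make that principle concrete, here is a minimal sketch of the first-party versus cross-site distinction. It is not how WebKit implements anything; the function names are hypothetical, and treating the last two labels of a hostname as the "site" is a simplifying assumption (real browsers consult the Public Suffix List). The tracker domains are made up.

```python
from urllib.parse import urlsplit


def site_of(url: str) -> str:
    """Naive 'site' of a URL: the last two labels of its hostname.

    Assumption for illustration only. Real browsers use the Public
    Suffix List, so this gets hosts like example.co.uk wrong.
    """
    host = (urlsplit(url).hostname or "").lower()
    return ".".join(host.split(".")[-2:])


def is_cross_site(page_url: str, request_url: str) -> bool:
    """True when a request goes to a different site than the page being visited."""
    return site_of(page_url) != site_of(request_url)


page = "https://www.nytimes.com/section/technology"

# A subdomain of the site you are visiting is still the first party.
print(is_cross_site(page, "https://static.nytimes.com/app.js"))

# An embedded resource from another site is the "other people" in question.
print(is_cross_site(page, "https://ads.tracker.example/pixel.gif"))
```

Under this reading, the policy is about what the second kind of request is allowed to learn and remember, not about what the site you chose to visit can see.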

What is Old is New

The aggressive change in tone sparked a bunch of responses. Google, without explicitly calling Apple out, defended its position and warned about an arms race between tracking and tracking prevention. Privacy researchers from Princeton University were not impressed by Google’s arguments. Careful Margins readers might have seen both takes linked last week.

Another response, which I had also linked, came from Ben Thompson at Stratechery. Thompson argues, in his usual eloquent and persuasive style, that Apple is not just going to accidentally entrench the position of the incumbents (Facebook and Google), but is also throwing out the baby with the bathwater. He calls this “privacy fundamentalism”.

He further goes on to point to Apple’s fumbled handling of Siri recordings as a sign of their hypocrisy at best or being blinded by their fundamentalism at worst.

I am troubled by this framing. I find his examples cherry-picked, and his arguments are not well supported by historical evidence. Let’s take it step by step.

Nothing Fundamental about Fundamentalism

A big part of Thompson’s argument relies on a hypothetical that simply hasn’t been borne out yet. The WebKit team lists a bunch of things that might break as they tighten cross-site tracking prevention. One item that stands out is federated logins, which Thompson uses for Stratechery. On the surface, it does look like he might have a case.

Maybe not. We just don’t know yet. If you actually check the policy, right after the part Thompson quotes, you’ll see the WebKit team opening a giant parenthesis about handling such cases:

However, we will try to limit unintended impact. We may alter tracking prevention methods to permit certain use cases, particularly when greater strictness would harm the user experience

A less acknowledged point in this tracking prevention debate is that what is now a detailed policy has been the WebKit team’s modus operandi for a while now.

The rules might not have been as formalized, but they aren’t that new. Intelligent Tracking Prevention (ITP), which is the marketing term for the suite of technologies Apple uses to “intelligently” detect and quarantine or prevent cross-site tracking, was announced more than two years ago.

The initial write-up explicitly calls out how federated sign-ons might be affected, and how ITP tries to handle those cases in a smart way. It notes some small fixes websites might need, but they are hardly onerous. ITP has been turned on by default for almost two years on iOS and Safari on Mac. The web continues to function.

Federated login is just one example, and Thompson flags other potential fallout. Yet even a cursory look at historical evidence shows that the WebKit team is well aware of the impact of their actions on the greater web.

Thompson also argues that Apple’s approach is too heavy-handed. Even third-party browsers like Chrome on iOS basically use the WebKit engine with a skin on top. This, he argues, is Apple throwing its weight around to the detriment of not just users, who’ll lose out on the benefits of tracking, but also the industry as a whole.

Enjoy Being Watched?

Again, this argument doesn’t hold much water. As the previously mentioned researchers point out, users do not like to be tracked from site to site. People often talk about how it feels like Instagram is listening to their conversations, since Instagram’s targeting is so good. We know that’s not true. But people also talk about how the same ads follow them from site to site. That one is real; it’s a commonly employed advertising technique called retargeting. It is the technology that allows your activity on one site to affect the ads you see on another. Ad networks have turned the entire internet into one giant Hotel California.
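For the curious, here is a toy simulation of how retargeting can work with a third-party cookie. It is a sketch under assumptions, not any real ad network’s code: the `AdNetwork` and `Browser` classes, the site names, and the ad copy are all hypothetical, and real systems involve many more parties.

```python
import itertools


class AdNetwork:
    """Hypothetical third-party ad network embedded on many unrelated sites."""

    def __init__(self):
        self._ids = itertools.count(1)
        self.history = {}  # cookie id -> list of (site, product) views

    def serve(self, cookie, site, product=None):
        """Runs whenever a page embedding the network loads.

        Returns (cookie for the browser to store, ad to show).
        """
        if cookie is None:
            cookie = next(self._ids)  # first sighting: mint a new identifier
        seen = self.history.setdefault(cookie, [])
        ad = f"Still thinking about {seen[-1][1]}?" if seen else "generic ad"
        if product is not None:
            seen.append((site, product))
        return cookie, ad


class Browser:
    """Holds (or refuses to hold) the ad network's third-party cookie."""

    def __init__(self, block_third_party_cookies=False):
        self.block = block_third_party_cookies
        self.cookie = None

    def visit(self, network, site, product=None):
        cookie = None if self.block else self.cookie
        new_cookie, ad = network.serve(cookie, site, product)
        if not self.block:
            self.cookie = new_cookie
        return ad


network = AdNetwork()

tracked = Browser()
tracked.visit(network, "shoes.example", product="red sneakers")
print(tracked.visit(network, "news.example"))    # the sneakers follow you

private = Browser(block_third_party_cookies=True)
private.visit(network, "shoes.example", product="red sneakers")
print(private.visit(network, "news.example"))    # the network sees a stranger
```

The only thing linking the two visits is the identifier the ad network stored in the browser. Take that away and each page load looks like a new person, which is roughly what blocking third-party cookies does in this simplified picture.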

As I mentioned before, while the WebKit announcement had pizzazz, many of the technologies are already in today’s browsers. You can opt out (check out?) of most retargeting today by simply using Safari on your laptop, or by disabling third-party cookies in Chrome.

The reality is that most of the tracking Thompson argues might break is not there to enable services like his, but to serve people targeted ads. Brushing this off, or sidestepping it entirely, doesn’t do the discussion justice.

The adtech industrial complex has been complaining about Apple’s increasingly aggressive tactics for many (2015), many (2017) years. Yet, they have no one but themselves to blame. Large parts of the industry operate in the shadows. The sheer complexity of the ecosystem hides the flow of not just the money, but also the data. Even the most seasoned industry insiders cannot explain who collects what kind of data, how it gets combined with other sources, or how it gets resold.

Advertising can be useful, and not all industries have to be simple enough for the layperson to decipher. Yet, it’s increasingly clear which way the winds are blowing. Apple might be the privacy poster child, but around the world the regulatory and competitive landscape is clearly shifting toward limiting the collection and use of data. Mozilla, whose anti-tracking policy inspired WebKit’s announcement, is rolling out a new version of Firefox that blocks third-party cookies by default today.

Lastly, Thompson points to Apple’s clumsy handling of Siri recordings. Even though he steers clear of calling Apple a hypocrite, he describes Apple’s behavior as driven by fundamentalism as opposed to pragmatism.

First of all, Apple issued a rare (well, increasingly less rare) apology for its handling of the recordings and promised sweeping changes. This isn’t as good as doing the right thing from day one, but it should clear the hypocrisy charges.

Does this, however, mean that Apple is really doubling down on its fundamentalism, as Thompson would presumably argue, instead of looking for a pragmatic solution?

Privacy is Good

I believe that privacy is a fundamental human right. Moreover, I also believe that it’s a right that needs to be protected en masse, at a societal level, instead of on an individual level.

This means multiple things. One is that we need greater regulation that protects individuals by protecting the commons. It’s not just that individuals are woefully unequipped to understand and mitigate the risks but also that the risks brought on by pervasive hoarding of data affect more than those whose data is collected. In other words, if my data is collected, you might be at risk, but you don't have much say in what I do. Improvements and changes in this arena will be on the policy level.

The technology side can do more too. If we start with the base assumption that tracking is just a fact of life, we will not get anywhere. Yet, there’s no hard-and-fast rule that things need to work this way. Companies like Uber (my former employer) and Apple invest heavily in technologies like differential privacy that allow building features without having to build dossiers.
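To give a flavor of the idea, here is a minimal sketch of randomized response, a classic textbook mechanism that satisfies local differential privacy. It is not a description of what Uber or Apple actually ship; the function names and the 30% figure are made up for illustration.

```python
import random


def randomized_response(truth: bool, rng: random.Random) -> bool:
    """Report a noisy answer to a sensitive yes/no question.

    First coin: heads, answer truthfully. Tails, answer with a second
    coin flip. Any single report is deniable: a "yes" is only three
    times as likely from a true "yes" as from a true "no", which
    corresponds to a privacy parameter of epsilon = ln(3).
    """
    if rng.random() < 0.5:
        return truth
    return rng.random() < 0.5


def estimate_true_rate(reports) -> float:
    """Recover the population rate from noisy reports.

    P(report yes) = 0.5 * p + 0.25, so p = 2 * (observed - 0.25).
    """
    observed = sum(reports) / len(reports)
    return 2 * (observed - 0.25)


rng = random.Random(0)
# Hypothetical population: 30% of 100,000 people would truthfully answer "yes".
truths = [i < 30_000 for i in range(100_000)]
reports = [randomized_response(t, rng) for t in truths]

# Prints an estimate close to 0.3, without the collector ever holding
# a trustworthy answer from any one person.
print(round(estimate_true_rate(reports), 3))
```

The collector learns the aggregate it needs to build a feature, while the individual answers it stores are too noisy to serve as a dossier. Production systems are far more elaborate, but the trade is the same: a little accuracy for a lot less liability.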

Moreover, being able to run more and more of the complicated logic on the client, instead of on the server, again proves the feasibility of building things that protect people’s privacy. We might not have the technologies today, but that’s no reason to think we will never have them.

Data as a Liability

A big theme of The Margins is the idea of data-as-a-liability. When I wrote that first piece, my argument was never that data doesn’t have value, but that with it come liabilities. The overarching principle is that we need to fully account for the pluses and minuses of data. Today we only talk about the pluses, while the negatives simply accumulate. Companies immediately benefit from the assets, but given the lack of regulation with teeth, the risks are borne by society when things go bad.

We are in such a crazy place right now that aiming for normalcy feels like fundamentalism. As Thompson notes himself, it’s easier for a tech company to track you than not to. We are so used to the pervasiveness and ubiquity of surveillance that we can’t imagine a world without it. The current state of the world might feel inevitable, as if things fell into place this way by some natural order. That, to me, is the scary thought.

But I remain an optimist. I believe we can aim for a better world than today’s. We do need to aim for a more rational world, where decisions are made with data. But we need more than a philosophical framework to analyze the facts; we also need to acknowledge the technological status quo that shapes our thinking. We still have the ability to change where things go from here.

What I’m Reading

Amazon’s Next-Day Delivery System Has Brought Chaos And Carnage To America’s Streets — But The World’s Biggest Retailer Has A System To Escape The Blame: BuzzFeed has a sweeping piece reporting on how Amazon has built its own delivery network without actually building it. Lots of small companies whose entire revenues come from Amazon, trying to hit hyper-aggressive Amazon-esque delivery targets. Lots of accidents, lots of corners cut, yet Amazon isn’t in the chain of responsibility.

Amazon’s gargantuan delivery network was born after a notorious incident remembered in the corridors of the company’s Seattle headquarters as the “Christmas Fiasco” of 2013. As e-commerce began to boom and the holiday loomed, UPS and FedEx, which then delivered the bulk of Amazon’s packages along with the US Postal Service, were blindsided by the large volume of online orders and failed to deliver many parcels by December 25.

Furious, embarrassed, and determined that such an incident would never happen again, Amazon gave affected customers $20 gift cards while its executives hatched a bold and disruptive plan to free themselves from over-dependence on the large, established carriers. They would develop a network of small and midsize delivery companies to take over key routes, working directly out of special Amazon delivery stations, rather than UPS or FedEx facilities, and delivering packages according to Amazon’s own routing algorithms.

Vergecast: Is Facebook ready for 2020?: The Verge’s Nilay Patel hosts Alex Stamos, former Yahoo! and Facebook Chief Security Officer and current Twitter persona (and director of Stanford’s Internet Observatory). The transcript doesn’t do the entire interview justice. There’s a decent amount of discussion on Facebook, election security, and regulation. But the real meat is Alex and Nilay discussing the tradeoffs tech companies have to make between security and privacy, privacy and revenue, and many others. You should listen to the entire thing.

That was based upon a very traditional idea of what is government interference online. Malware account takeovers, suppression of dissidents. It did not include, you know, hot takes and edge-lording by people pretending to be Black Lives Matter activists who were actually in St. Petersburg. So a lot has changed ... That is now an entire kind of subfield of trust and safety being invented right now at places like Google, Twitter, and Facebook, and there are people whose entire job it is to do that.